01 Oct 2019

GENESIS: Generative Scene Inference and Sampling with Object-Centric Latent Representations


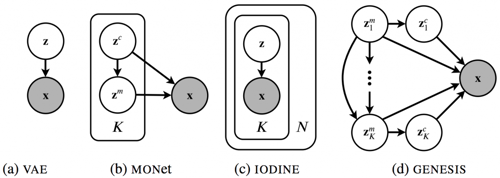

Generative latent-variable models are emerging as promising tools in robotics and reinforcement learning. Yet, even though tasks in these domains typically involve distinct objects, most state-of-the-art generative models do not explicitly capture the compositional nature of visual scenes. Two recent exceptions, MONet and IODINE, decompose scenes into objects in an unsupervised fashion. Their underlying generative processes, however, do not account for component interactions. Hence, neither of them allows for principled sampling of novel scenes. Here we present GENESIS, the first object-centric generative model of 3D visual scenes capable of both decomposing and generating scenes by capturing relationships between scene components. GENESIS parameterises a spatial GMM over images which is decoded from a set of object-centric latent variables that are either inferred sequentially in an amortised fashion or sampled from an autoregressive prior. We train GENESIS on several publicly available datasets and evaluate its performance on scene generation, decomposition, and semi-supervised learning.
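To make the "spatial GMM over images" concrete, the following is a minimal sketch of how a per-pixel mixture likelihood could be evaluated from K decoded components. It is not the authors' implementation: the function name `spatial_gmm_log_likelihood`, the array shapes, and the fixed shared standard deviation `sigma` are assumptions made for illustration only.

```python
import numpy as np


def spatial_gmm_log_likelihood(x, means, mask_logits, sigma=0.7):
    """Log-likelihood of an image under a per-pixel Gaussian mixture.

    Hypothetical shapes (assumptions for this sketch):
      x:           (H, W, C)    observed image
      means:       (K, H, W, C) per-component decoded appearance
      mask_logits: (K, H, W)    unnormalised per-component mask scores
      sigma:       shared, fixed component standard deviation (assumed)
    """
    # Softmax over the K components gives per-pixel mixing probabilities.
    shifted = mask_logits - mask_logits.max(axis=0, keepdims=True)
    log_pi = shifted - np.log(np.exp(shifted).sum(axis=0, keepdims=True))

    # Gaussian log-density of each pixel under each component,
    # summed over colour channels -> shape (K, H, W).
    log_norm = -0.5 * np.log(2.0 * np.pi * sigma ** 2)
    log_px = (log_norm - 0.5 * ((x[None] - means) / sigma) ** 2).sum(axis=-1)

    # Numerically stable log-sum-exp over components, then sum over pixels.
    joint = log_pi + log_px
    peak = joint.max(axis=0)
    per_pixel = peak + np.log(np.exp(joint - peak).sum(axis=0))
    return per_pixel.sum(), np.exp(log_pi)
```

The second return value holds the mixing probabilities, which can be read as soft segmentation masks: at each pixel they sum to one across the K components.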

Figure: Graphical model of GENESIS compared to related methods. Images x are encoded by latent variables z. Object-centric models partition z into K components. N denotes the number of iterative refinement operations in IODINE. Unlike MONet and IODINE, GENESIS captures relations between the K component latents in an autoregressive fashion.
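The difference between an independent prior and an autoregressive prior over the K component latents can be written out as a pair of factorisations. This is a generic sketch using the symbols z and K from the caption; the exact parameterisation used in the paper may differ.

$$
p(z_{1:K}) = \prod_{k=1}^{K} p(z_k) \qquad \text{(independent components)}
$$

$$
p(z_{1:K}) = \prod_{k=1}^{K} p(z_k \mid z_{1:k-1}) \qquad \text{(autoregressive components)}
$$

In the autoregressive form, each component is sampled conditioned on the components drawn before it, which is what lets the prior express relationships between scene components.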

M. Engelcke, A. R. Kosiorek, O. Parker Jones, and I. Posner, “GENESIS: Generative Scene Inference and Sampling with Object-Centric Latent Representations,” International Conference on Learning Representations (ICLR), 2020.
